In an earlier blog post I examined how the term ‘problem solving’ means different things to different people, and how this can lead to unhelpful cross-talk in discussions about teaching mathematics.

So what about the term ‘rich task’? The use of these tasks is often encouraged as good pedagogical practice. But are we all talking about the same thing? Before reading further, I encourage you to pause and articulate what you mean by ‘rich task’, as it will help to have some kind of image in mind before continuing.

To explore what ‘rich task’ means, I turned to some of my favourite thinkers and resources. Things turned muddy pretty quickly …

Terms aplenty

Here is an abridgement of page 20 of Peter Liljedahl’s book Building Thinking Classrooms: “Good problem-solving tasks require students to get stuck and then to think, to experiment, to try and to fail, and to apply their knowledge in novel ways in order to get unstuck. […] Problem-solving tasks are often called non-routine tasks because they require students to invoke their knowledge in ways that have not been routinised. […] Good problem-solving tasks are also called rich tasks in that they require students to draw on a rich diversity of mathematical knowledge and to put this knowledge together in different ways in order to solve the problem. They are also called rich tasks because solving these problems leads to engagement with a rich and diverse cross-section of mathematics.”

Peter Sullivan’s three-book series, containing what would be considered rich tasks, variously refers to ‘good questions’, ‘open-ended maths activities’ (the title of Book One), ‘challenging mathematical tasks’ (the title of Book Two), and ‘low-floor high-ceiling tasks’. In Book Three (Building Engagement in Middle Years Mathematics), a challenging task is described as one in which ‘students do not initially know how to proceed, have not been told how to proceed by the teacher, and are expected to make decisions on solutions or solution strategies for themselves’.

A low-threshold high-ceiling task is described on the NRICH website as ‘one which is designed to be mathematically accessible, and to have built-in extension opportunities. In other words, everyone can get started and everyone can get stuck.’ The AMSI Calculate website implies that low-floor high-ceiling is synonymous with ‘rich tasks’. Dan Finkel refers to rich tasks as ones that make rich learning experiences possible, ‘where every student has the opportunity to problem-solve and engage in genuine mathematical thinking’. This aligns with an article on the NRICH website in which Jennifer Piggott describes a rich task as ‘having a range of characteristics that together offer different opportunities to meet the different needs of learners at different times’, a description that edges towards within-task differentiation.

Van de Walle [1] talks about creating classroom experiences in which students can engage in learning by inquiry, and notes that problems or tasks with the potential to engage students in this way are referred to as ‘worthwhile tasks’ or ‘rich tasks’. The Australian reSolve program provides rich teaching resources that ‘embody a spirit of inquiry’, and the NZ Maths website gives a range of rich learning activities ‘designed to provide engaging contexts’. Finally, back to Liljedahl (p. 22), who defines numeracy tasks as ones ‘that are based not only on reality, but on the reality that is relevant to students’ lives. From cell phones to entertainment to sports, these tasks are built up specifically to engage students in rich tasks wherein they have to negotiate the ambiguity inherent in real-life experiences.’

So many terms, and with different emphases. Is a rich task one that is engaging? Draws from a wide range of mathematical concepts? Is given without direction? Is easy to start? Is contextual? What makes a task rich? What distinguishes one task from another? And does it really matter what we call these tasks? As Peter Liljedahl (p. 20) says, ‘Regardless of how they are referred to, what makes a task a good problem-solving task is not what it is, but what it does. And what they do is make students think.’

I think it matters. In a 2017 paper (which we’ll come back to shortly), Colin Foster and Matthew Inglis write that ‘academics, policymakers and curriculum designers who use such language [e.g. authentic, rich] to communicate their intentions will succeed only if teachers interpret these terms in the ways intended.’

How do teachers interpret these terms?

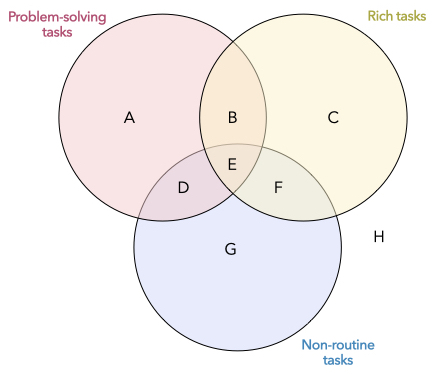

To give an informal picture of how differently teachers perceive these terms: last year (and for a different purpose), a colleague and I asked a number of teachers to classify these twelve tasks on the Venn diagram below. When we collated the group’s responses, tasks such as (3), (8) and (11) appeared in five different regions, and no task appeared in exactly one region. In other words, there was no consensus about how to classify these tasks or what these terms mean. The animated discussion that ensued convinced us that this is something worth untangling further.

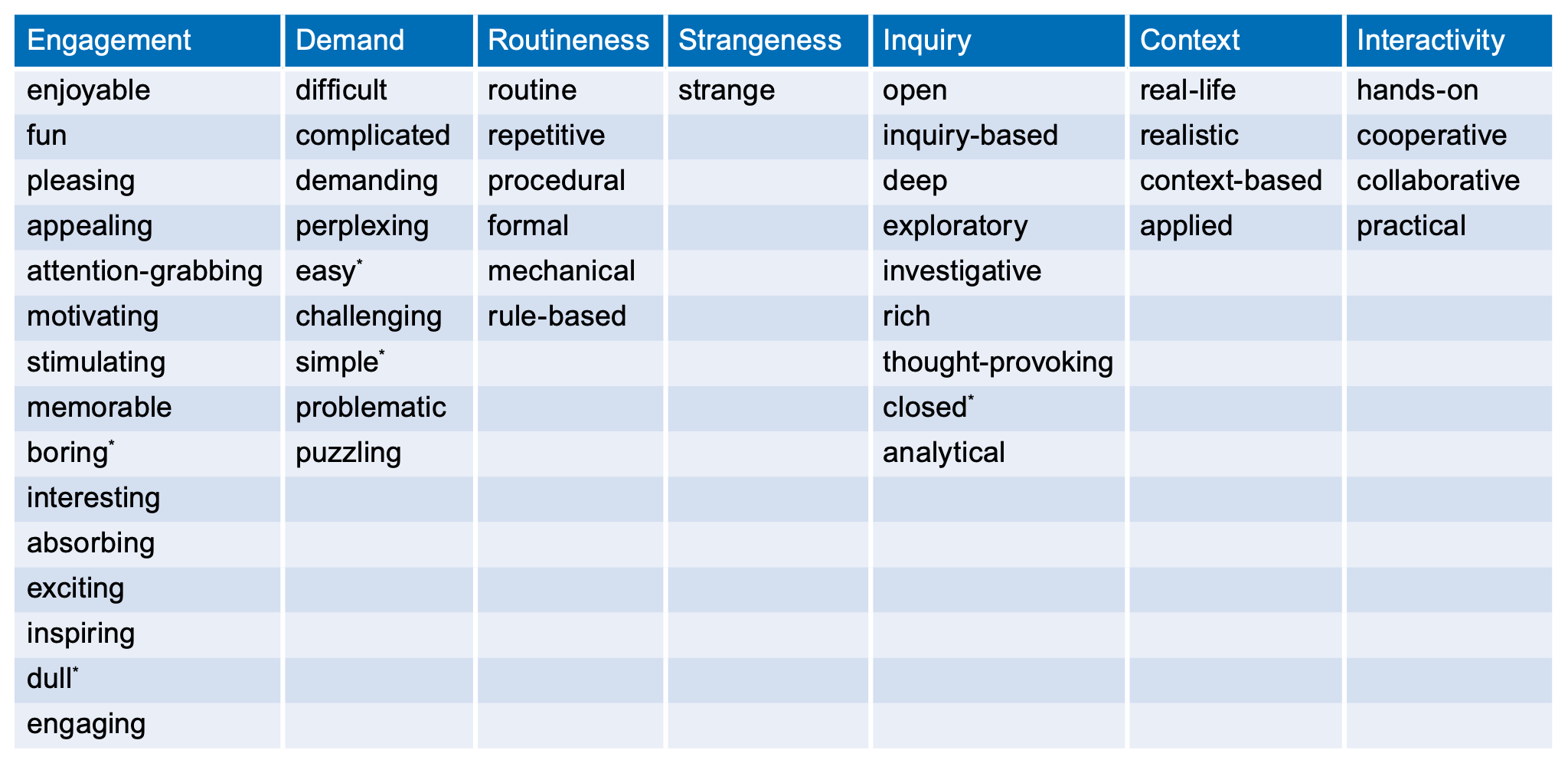

It turns out that Foster and Inglis have already been down this path. In their 2017 and 2018 papers, they describe two studies investigating how mathematics teachers use adjectives to describe mathematics tasks. In the first study, they compiled a list of 84 adjectives that have been used to describe mathematics tasks. Using an internet-based survey, they asked secondary mathematics teachers to think of a task they had used recently, or had seen another teacher use with learners, and to rate how accurately each of the 84 adjectives described that task. A factor analysis whittled the 84 adjectives down to seven factors that could be used to describe the tasks, which they labelled engagement, demand, routineness, strangeness, inquiry, context and interactivity. The adjectives most representative of each factor are shown in the table below (from Foster and Inglis 2018). To summarise, the 360 participants in the first study characterised tasks by appraising them in ways that can be encapsulated by these seven main factors.

In the second study, Foster and Inglis set out to discover the extent to which teachers agreed about how particular adjectives applied to the same task. In the first stage, participants were all presented with the same task (called Trapped Squares) and asked to indicate how accurately each of 20 adjectives described it (four adjectives for each of five of the factors described earlier, excluding ‘strangeness’ and ‘interactivity’). In the second stage, participants were presented with two tasks (Product and Factor) and asked to say which task was better described by each adjective.

As in our informal study, there was a lot of variation in how teachers described tasks. Significantly for this blog post, Foster and Inglis found that ‘some teachers felt that the Trapped Squares task was engaging and inquiry-based, the signature characteristics of ‘rich’ tasks according to the first study, but many regarded it as neither engaging nor inquiry-based.’ In other words, teachers disagreed considerably about whether a given task was ‘rich’ or not.

As Foster and Inglis conclude: ‘… this means that we should not assume that teachers will interpret words like ‘rich’ in the same way. This is a problem because it limits how effective it can be talking in general terms about, say, ‘rich tasks’. If you want someone to know what you mean, you really need to give examples and not rely too much on adjectives.’

Why use rich tasks?

Let’s back up a bit. Why the emphasis on these types of tasks? In their book Designing and Using Mathematical Tasks, John Mason and Sue Johnston-Wilder lay out the rationale for good mathematical tasks. They write on p. 5: ‘The basic aim of a mathematics lesson is for learners to learn something about a particular topic. To do this, they engage in tasks. The purpose of a task is to initiate activity by learners.’

The activity itself is not learning. As they write on page 70: “But what is mathematical activity? It must be more than learners busily getting on with something or producing pages of written work. Although learners may be happily engaged in social interactions through discussion, or fully occupied in using scissors or drawing up tables, they may still not be undertaking mathematical activity. On the other hand, they may be sitting quietly, apparently staring out the window, and yet be thinking deeply: this could be mathematical activity. For learners’ activity to be mathematical, there have to be elements of mathematical thinking, and there also has to be some development of the learners’ experience in awareness. It is not sufficient that tasks are completed more or less correctly.”

This echoes the earlier point by Peter Liljedahl that, regardless of what we call tasks, what is important (and here I also agree) is that they make students think mathematically.

Good tasks used poorly; poor tasks used well

An important aspect not yet mentioned is the environment in which a task is used. Dan Meyer popularised the Three Act Task format, which can be used to make students think mathematically. But with too much teacher intervention (e.g. ‘this is the question we will investigate’, ‘now use this equation’, ‘now draw a diagram’, ‘now check your work’), the task becomes one of following the solution path already laid out rather than students planning the route for themselves. Tasks intended by a writer to provide rich experiences can be enacted poorly by a teacher.

In a similar way, poorly designed tasks can be used well. John Mason (2020) writes that ‘there are no rich tasks, only tasks used richly’. I experienced this first-hand when I took a page of fairly routine exercises from a textbook and, instead of asking students to work their way through all twelve exercises, asked ‘Which shape looks to have the easiest area to calculate? Which problem looks to have the hardest? Why?’ and then opened the floor to debate.

In fairness, there is nothing wrong with this set of exercises. But it took only a simple tweak to turn it into a task that promotes higher-level thinking and reasoning. Chris Luzniak’s book ‘Up for Debate!’ (which I have written about previously) provides a wealth of ways to open up tasks in this way.

In summary, it both matters what we call tasks and it doesn’t. What’s important is that we use tasks that make all students think about mathematical ideas in meaningful ways. The tasks we use can occupy multiple lessons or brief moments. They can be set in ‘real world’ contexts or sit entirely in the realm of mathematics. They can be presented in a high-tech environment or written on the whiteboard. What’s important is that they make all students think, about mathematical ideas, and in meaningful ways. Keeping that at the forefront of our minds when choosing tasks will help ensure that the mathematical experiences we provide our students are rich ones.

References

Foster, C. and Inglis, M. (2017) ‘Teachers’ appraisals of adjectives relating to mathematics tasks’, Educational Studies in Mathematics, 95(3), pp. 283–301. Available at: https://doi.org/10.1007/s10649-017-9750-y.

Foster, C. and Inglis, M. (2018) ‘How do you describe mathematics tasks?’, Mathematics Teaching, 260, pp. 18–20.

Mason, J. (2020) ‘Effective questioning and responding in the mathematics classroom’, in Ineson, G. and Povey, H. (eds) Debates in Mathematics Education. 2nd edn. Abingdon: Routledge (Debates in Subject Teaching), pp. 131–142.

Mason, J. and Johnston-Wilder, S. (2006) Designing and Using Mathematical Tasks. Tarquin.

[1] Van de Walle, J. et al. (2019) Primary and Middle Years Mathematics: Teaching Developmentally. Pearson Education Australia, p. 38.